Why does a joke lose its punchline when it’s translated by artificial intelligence?

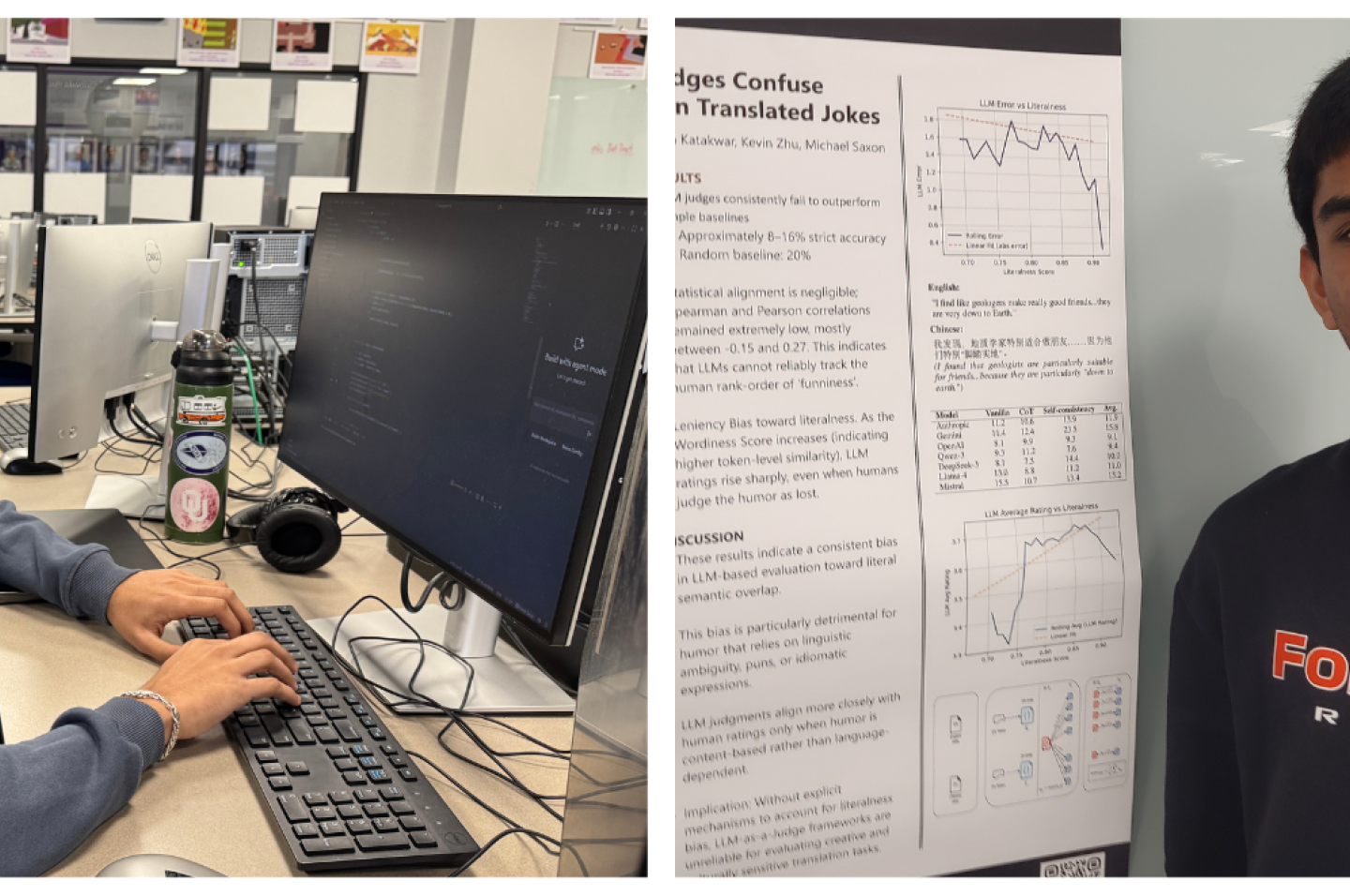

That question became the foundation of a research project led in part by Devansh Katakwar, a student in Francis Tuttle Technology Center’s Computer Science Academy on the Rockwell Campus.

Through Algoverse, a program that pairs high school students with graduate-level mentors to conduct AI-related research, Katakwar and a group of peers wrote a formal research paper. After initially considering a music-related topic, they pivoted to joke translation.

Katakwar found the program through a Google search for computer science opportunities for high school students. The Deer Creek High School senior applied and was accepted into the summer cohort of students from around the world.

The paper, titled “Not Funny Anymore,” examined how artificial intelligence models handle jokes translated between languages. Starting from a dataset of jokes, the team translated them into various languages and asked multiple AI models to evaluate and translate them. They found that the models struggled significantly with cultural context.

“Throughout the experiments, we found that AI is not very good at picking up cultural context in humor translation,” Katakwar said. “If we ask it to translate the joke back into English, it’s not very good at making it funny, and it’s not very good at picking up why it’s funny.”

The team concluded that future AI models need more cultural data in their training datasets. Katakwar explained that AI systems rely on an “embedding space,” which he compared to a 3D map. When someone types text into the system, the text is split into tokens, which are then mapped in relation to one another. Some tokens, like “mother” and “daughter,” would sit very close together. But “mother” and “taco” would be far apart, he said.

While related terms cluster closely together, cultural nuances may not be positioned in a way that allows AI to interpret humor effectively. The group suggested that future models should better organize cultural context and cues so they are closer together, making it easier for AI to pick them up.
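The map comparison can be illustrated with a minimal sketch. It is not the team's code: the three-number coordinates below are hand-picked, hypothetical values, and the function names are made up for this example. Real models learn embeddings with hundreds or thousands of dimensions. Cosine similarity, a common way to measure how closely two embeddings point in the same direction, stands in for "distance on the map."

```python
import math

# Hypothetical 3-D coordinates chosen by hand for illustration only.
# Real embedding spaces are learned and have far more dimensions.
TOY_EMBEDDINGS = {
    "mother": (0.9, 0.8, 0.1),
    "daughter": (0.8, 0.9, 0.2),
    "taco": (0.1, 0.0, 0.9),
}


def cosine_similarity(a, b):
    """Return the cosine of the angle between vectors a and b."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def similarity(word_a, word_b):
    """Look up two words in the toy table and compare their vectors."""
    return cosine_similarity(TOY_EMBEDDINGS[word_a], TOY_EMBEDDINGS[word_b])


if __name__ == "__main__":
    print("mother vs. daughter:", round(similarity("mother", "daughter"), 2))
    print("mother vs. taco:", round(similarity("mother", "taco"), 2))
```

With these made-up coordinates, “mother” and “daughter” score much higher than “mother” and “taco,” mirroring the idea that related tokens sit close together while unrelated ones sit far apart.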

The paper was accepted to the AAAI Conference on Artificial Intelligence. Katakwar and his team were set to travel to Singapore in January to present at the conference, but winter storms canceled the trip. They are planning to submit to other conferences as well and hope to have the opportunity to present their research.

“It was a really unique experience and beneficial because I got to know a lot more about the inner workings of AI and what issues are currently present with it,” Katakwar said. “It was a really good experience that I’m grateful to have.”